I'll set Java memory down and slowly increase if I get a failure pop-up.

So far, here's the process I've followed in transitioning from Windows -> Synology:

1. Copy all data (which isn't all backed up in CP) to Synology.
2. Select some directories known to be completely backed up and let dedup do its thing.
3. Add a few more known complete directories and let them be backed up (dedup).
4. Now adding directories that are new to the CP backup and noticing slow upload.

As I go through the History log I can see where I updated my selections and dedup caused nominal upload to be very good. There are some recent points, though, where I can see nominal upload at <=500Kbps. I'm pretty sure I'm past (or very close to) the end of dedup being effective and that I'm uploading new content.

Is this History log stored somewhere so that I could dump it into a spreadsheet and read it more easily? I couldn't figure out how to copy/paste its contents within the container UI.

Does CP throttle upload speeds? Even on Windows I don't think I ever got above 7-10Mbps upload.

Minor thing: I noticed that the History timestamps are in UTC. I've set DSM to Pacific time, but I'm assuming the container doesn't know this. I'm in Pacific, which means 3AM UTC (maintenance time for the CP container) hits at 10PM Pacific, during a time I'm likely using the unit.

It might be nice if the container documentation called out that the MAX_MEM variable and the Java mx setting are the same thing.
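The figures quoted in this thread mix kilobits per second (Kbps, Mbps) and kilobytes per second (KB/s), which makes them hard to compare. A minimal sketch of the conversion, assuming decimal units and 8 bits per byte; the function names are made up for illustration:

```shell
#!/bin/sh
# Convert kilobits per second to kilobytes per second (8 bits per byte).
kbps_to_kbytes() {
    awk -v kbps="$1" 'BEGIN { printf "%.1f\n", kbps / 8 }'
}

# Convert kilobytes per second to kilobits per second.
kbytes_to_kbps() {
    awk -v kbytes="$1" 'BEGIN { printf "%.1f\n", kbytes * 8 }'
}

kbps_to_kbytes 500      # 500 Kbps  -> 62.5 KB/s
kbps_to_kbytes 10000    # 10 Mbps   -> 1250.0 KB/s
kbytes_to_kbps 150      # 150 KB/s  -> 1200.0 Kbps (1.2 Mbps)
```

On these assumptions, the 150KB/s seen on the DSM counter is about 1.2Mbps, well under the 7-10Mbps seen on Windows.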

Any other tips to improve this?

Because of deduplication, the upload rate is known to be slow. However, the upload rate is not a good indication of the speed at which the data is backed up. For example, uploading 1MB could be all that is required to back up 10MB of data. So you should be watching the rate at which the backup progresses. You can look at the history (Tool->History) to get an indication of the "effective" upload rate.
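To make the distinction concrete, here is a rough sketch that compares the two rates for the 1MB/10MB example above. The 60-second interval and the function name are assumptions for illustration; the real numbers would come from the History window.

```shell
#!/bin/sh
# Print the effective backup rate next to the raw upload rate.
# $1 = bytes of data backed up, $2 = bytes actually uploaded, $3 = elapsed seconds
effective_rate() {
    awk -v backed="$1" -v sent="$2" -v secs="$3" 'BEGIN {
        printf "effective %.1f KB/s, uploaded %.1f KB/s\n",
               backed / secs / 1000, sent / secs / 1000
    }'
}

# 10MB backed up by uploading only 1MB, over an assumed 60 seconds.
effective_rate 10000000 1000000 60
# prints: effective 166.7 KB/s, uploaded 16.7 KB/s
```

A bandwidth counter would show only the second figure, which is why it understates backup progress while deduplication is doing most of the work.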

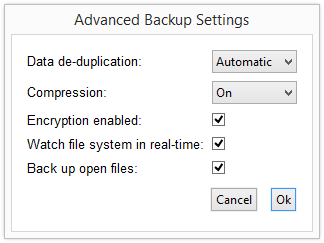

I've set upload bandwidth to unlimited in settings. I watch the DSM bandwidth counter and it's rarely rising above 150KB/s.

Here's how I launched my container for the first time: docker run -d \ [...] -v /volume1/docker/AppData/CrashPlan:/config:rw [...] restart. I have increased the inotify limit as per code42's instructions as well.

Are CRASHPLAN_SRV_MAX_MEM and "java mx, restart" actually independent values?

CRASHPLAN_SRV_MAX_MEM and the java mx, restart command control the same thing.

Since my backup is 6TB, code42 would tell me I need to give Java 6GB RAM. Since my Syn has 6GB that leaves 0B for the Crashplan container (and any other service).

To find the correct amount of memory to set, I would start with 4GB and see if CrashPlan crashes (you get a pop-up window in this case). If it does, increase it to 4.5GB and check for crashes again. This way, you will be able to find the minimum amount of memory required. It will probably be less than 6GB.

Everything CrashPlan writes (config, cache, etc.) goes under /config in the container (/volume1/docker/AppData/CrashPlan on the host). So as long as you create the container with the same mapping for /config, you can delete and re-create the container without any problem.
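As a rough sketch of the two points above (stepping the memory limit up, and re-creating the container with the same /config mapping), the script below only prints the commands so they can be reviewed first. The container name `crashplan`, the image name `jlesage/crashplan-pro`, and the `4096M` value format are assumptions not stated in this thread. Any other options from the original docker run command, which is truncated here, would need to be added back.

```shell
#!/bin/sh
# Dry-run sketch: prints the commands instead of running them.
NAME="crashplan"                                 # hypothetical container name
IMAGE="jlesage/crashplan-pro"                    # assumed image; use the one you launched with
CONFIG_DIR="/volume1/docker/AppData/CrashPlan"   # host path quoted in this thread

# Print the commands that replace the container with a new memory limit.
# $1 is the value for CRASHPLAN_SRV_MAX_MEM (the "M" suffix is an assumption).
recreate_cmds() {
    echo "docker stop $NAME"
    echo "docker rm $NAME"
    echo "docker run -d --name=$NAME -e CRASHPLAN_SRV_MAX_MEM=$1 -v $CONFIG_DIR:/config:rw $IMAGE"
}

# Start at 4GB; after a crash pop-up, repeat with 4608M (4.5GB), and so on.
recreate_cmds 4096M
```

Because the host directory is mapped to /config each time, removing the container should not discard CrashPlan's configuration or cache.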

I've gotten my container set up and dedup is helping me quickly catch up to my previous Windows CP installation. Prior to now, I've been uploading for about 16 months without ever 100% completing a backup (6TB).

Since my backup is 6TB, code42 would tell me I need to give Java 6GB RAM. Are CRASHPLAN_SRV_MAX_MEM and "java mx, restart" actually independent values?

I understand I have to remove and re-install the container to change SRV_MAX_MEM. I'm new to Linux and containers and don't understand how I would save my container's setup and config so that re-installing would go more quickly (or perhaps this isn't possible).
